The complete guide to A/B testing for pricing

Pricing remains one of the most powerful surfaces for experimentation.

But pricing experiments raise the stakes in ways other experiments typically don’t. Changes in pricing can influence customer trust, perceived fairness, and brand positioning. Dependencies between billing, sales, and finance also make pricing tests harder to coordinate than they first appear. And, unlike many product experiments, pricing tests must comply with legal and regulatory rules governing how prices are displayed, communicated, and differentiated across users.

Complexity compounds at the organizational level. Organizations in industries such as healthcare, airlines, automotive, banking, and telecommunications often manage multiple pricing models at once (such as fixed prices, subscription tiers, and negotiated contracts), so no single approach applies everywhere.

Despite this, a few underlying principles help explain how pricing experiments tend to work across different contexts. These principles are a useful starting point.

Principles shaping pricing experimentation

1. Pricing experimentation generally falls into three broad environments:

- Transactional commerce environments: These are high-frequency settings where prices are public, purchase decisions are immediate, and the sheer volume of transactions means experiments generate statistically meaningful results faster than almost any other environment.

- Subscription and self-serve environments: Subscription models introduce time as a variable. Because customers move through acquisition, activation, and retention in observable stages, pricing experiments can be designed to test more than just conversion. They can reveal how price shapes long-term behavior, expansion, and churn. This lifecycle visibility is a structural advantage, enabling teams to run experiments that inform not just what to charge, but when and how pricing should evolve across the customer relationship.

- Negotiated and relationship-mediated environments: Where prices are set through conversation rather than a checkout flow, traditional A/B testing does not apply directly. Experimentation here is comparative and cumulative. Insights emerge from structured variation across proposals, deals, and sales interactions over time. The discipline required is different: less about statistical significance at scale, more about building the infrastructure to learn systematically from every engagement.

In practice, most organizations operate across more than one of these environments simultaneously.

Understanding which applies, and where, is the key to designing experiments that are both feasible and meaningful.

2. Different pricing environments require different experimentation approaches.

Transactional commerce often supports randomized A/B tests (because of its transaction volume), subscription businesses must evaluate pricing changes across the customer lifecycle, and negotiated sales environments rely on learning across deals rather than classical A/B testing.
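Randomized assignment in transactional settings usually needs to be "sticky", so a returning visitor keeps seeing the same price rather than a new random one on each visit. A minimal sketch in Python, assuming each visitor has a stable identifier; the function name `assign_variant` and the experiment key are hypothetical and do not represent any specific platform's API:

```python
import hashlib


def assign_variant(user_id: str, experiment_key: str, variants=("control", "treatment")) -> str:
    """Deterministically map a user to one variant for a given experiment (hypothetical helper)."""
    # Salting with the experiment key keeps bucketing independent across experiments.
    key = f"{experiment_key}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    # Use the first 32 bits of the hash as a roughly uniform integer, then pick a variant.
    bucket = int(digest[:8], 16) % len(variants)
    return variants[bucket]


if __name__ == "__main__":
    for uid in ("visitor-1001", "visitor-1002", "visitor-1003"):
        print(uid, assign_variant(uid, "hypothetical-discount-test"))
```

Salting the hash with the experiment key means a visitor's bucket in one pricing test does not predict their bucket in another, which limits accidental interactions between concurrent tests.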

3. Operational constraints shape pricing experimentation as much as statistical design.

Billing infrastructure, regulatory requirements, competitive visibility, and internal margin guardrails frequently determine which pricing variations can realistically be tested.

4. Pricing experiments influence more than conversion metrics.

Changes in pricing can affect customer perception, brand positioning, and competitive dynamics, making it important to evaluate experiments within a broader commercial context. For example, when customers can easily compare prices across channels, cohorts, or competitors, tolerance for pricing variation decreases. This influences both experiment design and exposure strategy, as perception effects may extend beyond measured outcomes.

5. Pricing experiments often involve more than changing the price itself.

Teams frequently experiment with discount structures, plan design, feature boundaries, shipping thresholds, or proposal framing to understand how pricing influences customer decisions and perceived value.

Here’s how these principles show up in practice across different pricing environments.

A/B testing for pricing in transactional commerce environments

Transactional commerce environments are characterized by high transaction frequency, episodic purchase behavior, and externally visible pricing.

High purchase volume creates strong statistical opportunities for experimentation, while public price exposure introduces competitive and customer perception dynamics that shape how pricing variation can be introduced.
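To make the volume argument concrete, the standard two-proportion sample-size formula estimates how many visitors each variant needs to detect a given change in conversion rate. A sketch using only the Python standard library; the baseline and target rates in the usage lines are illustrative assumptions, not benchmarks:

```python
import math
from statistics import NormalDist


def sample_size_per_variant(p_control: float, p_treatment: float,
                            alpha: float = 0.05, power: float = 0.8) -> int:
    """Visitors needed per variant for a two-sided test of two conversion rates."""
    if p_control == p_treatment:
        raise ValueError("rates must differ to size a test")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for significance
    z_beta = NormalDist().inv_cdf(power)           # critical value for power
    p_bar = (p_control + p_treatment) / 2
    numerator = (
        z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * math.sqrt(p_control * (1 - p_control) + p_treatment * (1 - p_treatment))
    ) ** 2
    return math.ceil(numerator / (p_treatment - p_control) ** 2)


if __name__ == "__main__":
    # Illustrative: a 10% relative lift on a 3% baseline conversion rate.
    print(sample_size_per_variant(0.03, 0.033))
```

Small relative lifts on low baseline conversion rates push the requirement into tens of thousands of visitors per variant, which is why high-volume environments are the natural home for randomized pricing tests.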

eCommerce platforms are the clearest example of this setting, but similar dynamics appear across marketplaces, retail, travel booking, and app-based consumer services. In these environments, pricing experimentation often happens through promotional mechanics, threshold design, and contextual incentives rather than persistent base price changes.

What A/B testing for pricing typically looks like here:

- List price variation. Adjusting base price points tests willingness to pay directly, while often needing to stay aligned with competitive benchmarks.

- Discount depth decisions. Varying discount levels tests price sensitivity while highlighting trade-offs between increased conversions and margin impact.

- Coupon exposure strategies. Selectively showing coupons acts as an experiment on perceived deal value and purchase acceleration.

- Shipping threshold placement. Free shipping qualification levels act as pricing experiments that influence order value while shaping perceived transaction value.

- Bundle configuration choices. Combining products into bundles allows for testing perceived value and purchase likelihood.

- Volume discount ladders. Graduated discount structures test whether deeper discounts at higher quantities encourage customers to buy more.

- Membership and subscription commerce models. Recurring benefit programs act as experiments on loyalty economics and repeat purchase behavior.

- Promotional cadence decisions. Varying frequency and timing of promotions helps gauge anticipation dynamics and purchase urgency.
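Levers such as volume discount ladders and free shipping thresholds come down to simple arithmetic that each variant must apply consistently. A hedged sketch, assuming a hypothetical three-step ladder and a free shipping threshold that differs between control and treatment; all figures are illustrative:

```python
from decimal import Decimal, ROUND_HALF_UP

# Hypothetical volume ladder: (minimum quantity, discount rate). Illustrative values only.
VOLUME_LADDER = [(1, Decimal("0.00")), (3, Decimal("0.05")), (5, Decimal("0.10"))]

# Hypothetical free shipping thresholds under test, plus a flat shipping fee.
FREE_SHIPPING_THRESHOLD = {"control": Decimal("50.00"), "treatment": Decimal("75.00")}
SHIPPING_FEE = Decimal("5.99")


def ladder_discount(quantity: int) -> Decimal:
    """Return the deepest discount whose minimum quantity the order meets."""
    rate = Decimal("0.00")
    for min_qty, discount in sorted(VOLUME_LADDER):
        if quantity >= min_qty:
            rate = discount
    return rate


def order_total(unit_price: Decimal, quantity: int, variant: str) -> Decimal:
    """Apply the volume discount, then add shipping unless the variant's threshold is met."""
    rate = ladder_discount(quantity)
    subtotal = (unit_price * quantity * (1 - rate)).quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)
    shipping = Decimal("0.00") if subtotal >= FREE_SHIPPING_THRESHOLD[variant] else SHIPPING_FEE
    return subtotal + shipping


if __name__ == "__main__":
    # Same basket, different threshold: only the shipping outcome changes.
    for variant in ("control", "treatment"):
        print(variant, order_total(Decimal("20.00"), 3, variant))
```

Holding the ladder fixed while varying only the threshold keeps the comparison clean; varying both at once would make it hard to attribute a change in order value to either lever.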

A few key considerations:

These considerations highlight how A/B testing for pricing in transactional commerce works within a broader commercial system rather than a purely controlled experimental surface. Success therefore depends on balancing analytical learning with commercial awareness, ensuring experimentation informs pricing without destabilizing marketplace dynamics.

Interpreting results in transactional pricing experiments

Interpreting results here requires considering several real-world constraints:

Competitors may introduce similar promotions during the experiment period, making it harder to isolate the impact of the pricing presentation. Inventory constraints can also influence outcomes. If discounted products sell out quickly, later visitors may encounter different prices or availability regardless of the experimental variant.

Customers may also encounter different pricing messages across channels, such as seeing one variant on mobile and another on desktop before completing their purchase. In addition, overlapping promotions (such as coupons, cashback offers, or limited-time sales events) can blur the true driver of purchase behavior.

As a result, pricing experiments in transactional commerce environments often require careful coordination with merchandising and promotional planning to ensure results reflect meaningful pricing insights rather than temporary market dynamics.
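One practical check for the cross-channel problem described above is to flag customers who were exposed to more than one variant before purchasing, then exclude them or analyze them separately. A minimal sketch, assuming exposure events can be tied to a resolved customer identifier across devices (often only possible for logged-in users); the event shape and function name are hypothetical:

```python
from collections import defaultdict


def find_contaminated_customers(exposures):
    """Return customers who saw more than one variant, with the (channel, variant) pairs seen.

    `exposures` is an iterable of (customer_id, channel, variant) tuples.
    """
    seen = defaultdict(set)
    for customer_id, channel, variant in exposures:
        seen[customer_id].add((channel, variant))
    return {
        customer_id: sorted(pairs)
        for customer_id, pairs in seen.items()
        if len({variant for _, variant in pairs}) > 1
    }


if __name__ == "__main__":
    events = [
        ("c1", "mobile", "control"),
        ("c1", "desktop", "control"),
        ("c2", "mobile", "control"),
        ("c2", "desktop", "treatment"),
    ]
    print(find_contaminated_customers(events))
```

A high share of flagged customers is itself a signal that assignment should be keyed on a cross-device identifier rather than a per-device cookie.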

A/B testing for pricing in subscription and self-serve environments

Subscription and self-serve environments typically involve automated purchase journeys, ongoing payments for continued access, and long-term customer relationships.

These conditions allow teams to vary pricing experiences within digital purchase journeys and observe how those differences influence behavior over time.

SaaS solutions are one example, but similar dynamics appear across digital memberships, subscription media, and usage-based platforms. Within these environments, pricing decisions rarely exist in isolation. Pricing is intertwined with plan design (how offerings are structured across tiers), value differentiation (how features, limits, and entitlements define perceived value), and lifecycle experience (how customers enter, upgrade, and renew over time).

What A/B testing for pricing looks like here:

- Funnel-structure decisions. Choosing between free-trial and freemium entry is a pricing-adjacent experiment in which downstream activation and retention become the primary signals.

- Plan price variation across tiers. Adjusting price points across plans tests willingness to pay while revealing how customers interpret tier differences.

- Value boundary shifts. Moving features across plans acts as a pricing-adjacent experiment that reshapes perceived value without changing the underlying price.

- Discount exposure decisions. Varying when and to whom discounts are shown reveals elasticity patterns while highlighting how contextual timing influences purchase intent.

- Billing cadence choices. Monthly versus annual billing works as a pricing experiment that weighs adoption friction against commitment incentives and the speed at which revenue is realized.

- Usage threshold placement. Adjusting where consumption-based pricing inflection points occur acts as an experiment on perceived fairness and upgrade readiness.

- Expansion pricing mechanics. These are decisions around seats, add-ons, or overages that act as experiments on how upgrades translate into incremental revenue.
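Usage threshold placement is easiest to reason about with the billing arithmetic written out. The sketch below assumes graduated tiers (each unit is billed at the rate of the tier it falls in), which is only one of several consumption-based models; the tier boundaries and per-unit prices in cents are hypothetical, with the treatment moving the free allowance from 1,000 to 2,000 units:

```python
# Hypothetical graduated tiers: (upper bound in units or None for unbounded, cents per unit).
# Tiers must be sorted by upper bound, with the unbounded tier last.
CONTROL_TIERS = [(1_000, 0), (10_000, 2), (None, 1)]
TREATMENT_TIERS = [(2_000, 0), (10_000, 2), (None, 1)]


def usage_charge_cents(units: int, tiers) -> int:
    """Graduated pricing: bill each unit at the rate of the tier it falls in."""
    charge = 0
    lower = 0
    for upper, cents_per_unit in tiers:
        if units <= lower:
            break  # no usage reaches this tier
        top = units if upper is None else min(units, upper)
        charge += (top - lower) * cents_per_unit
        if upper is None:
            break
        lower = upper
    return charge


if __name__ == "__main__":
    for usage in (500, 5_000, 12_000):
        print(usage, usage_charge_cents(usage, CONTROL_TIERS), usage_charge_cents(usage, TREATMENT_TIERS))
```

Working in integer cents avoids floating-point rounding in the bill itself; the experiment then compares how each threshold affects upgrade rates and perceived fairness, not just the charge.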

A few key considerations:

In all, pricing experimentation in subscription and self-serve environments extends beyond isolated price optimization toward lifecycle-aware monetization learning. Success requires balancing early acquisition signals with longer-term retention and growth dynamics, so that experimentation informs monetization strategy without over-indexing on short-term outcomes.

A/B testing for pricing in negotiated and relationship-mediated environments

Negotiated and relationship-mediated environments are characterized by human-mediated purchase journeys, lower transaction frequency, and pricing decisions shaped through ongoing client relationships. Conversions happen through conversations, proposals, and evolving scope rather than standardized purchase flows.

In these environments, randomized A/B testing for pricing is often less feasible. Instead, organizations explore pricing through intentional variation in proposals, pricing models, and negotiation approaches, generating comparative learning across engagements.

Consulting, agency, and professional services organizations are common examples of this setting, though similar dynamics appear across enterprise sales, B2B partnerships, and contract-driven engagements.

What pricing experimentation looks like here:

Organizations test pricing across multiple levers, including pricing models, scope-to-price balance, anchor placement (the initial price reference introduced in a proposal), concession patterns (how and when discounts or flexibility are introduced during negotiation), and proposal positioning (how the proposal communicates value, outcomes, and price justification). Teams then observe how these differences influence negotiation dynamics and deal outcomes.

Insights emerge through comparison across engagements rather than controlled experimentation or A/B tests.

For example, as clients increasingly expect agencies to use AI-driven efficiencies, organizations may experiment with how those capabilities show up in pricing and proposals. Some reduce prices, others keep prices the same but expand scope, while others position AI as enabling faster delivery or higher productivity. Comparing outcomes across these approaches helps teams understand how AI changes client expectations and pricing acceptance.

While not randomized, these patterns resemble structured experimentation across comparable opportunities, where different commercial narratives are intentionally explored and learning accumulates through synthesis over time. It’s about structured learning across interactions.
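Synthesis across engagements is far easier when each deal is logged in a consistent shape. A minimal sketch of such a log, assuming a hypothetical record with the approach used, the outcome, and the proposed versus final value; the approach labels echo the AI example above and are illustrative:

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class DealRecord:
    approach: str          # hypothetical label, e.g. "lower_price", "same_price_more_scope"
    won: bool
    proposed_value: float  # price in the initial proposal (the anchor)
    final_value: float     # agreed price; equals proposed_value if nothing was conceded


def summarize_by_approach(deals):
    """Deal count, win rate, and average concession on won deals, per approach."""
    groups = defaultdict(list)
    for deal in deals:
        groups[deal.approach].append(deal)
    summary = {}
    for approach, items in groups.items():
        won = [d for d in items if d.won]
        concessions = [1 - d.final_value / d.proposed_value for d in won]
        summary[approach] = {
            "deals": len(items),
            "win_rate": len(won) / len(items),
            "avg_concession": sum(concessions) / len(concessions) if concessions else None,
        }
    return summary
```

Because deals are not randomized and counts are usually small, differences in these summaries are directional signals to investigate rather than causal estimates.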

A few key considerations (pricing experimentation isn’t classical A/B testing here):

Learning here unfolds across engagements rather than through conventional controlled experimentation structures. Success therefore depends on intentionally capturing insights across opportunities, enabling teams to refine pricing approaches over time.

Alongside these methodological realities, pricing experimentation must also operate within regulatory frameworks that shape how pricing variation can be introduced across markets.

Legal and regulatory considerations in A/B testing for pricing

Several regulatory domains shape how A/B testing for pricing can be designed and executed in practice, including:

- Consumer protection and misleading pricing: Experiments should avoid inflated reference prices, inconsistent savings claims, or urgency messaging that could mislead customers about value.

- Differential pricing and fairness considerations: While pricing variation is expected, segmentation logic should be reviewed to ensure experiments do not create problematic or unjustified differential treatment.

- Subscription transparency requirements: Tests involving trials, renewals, or billing changes must maintain clear disclosure of pricing commitments and cancellation pathways.

- Competition and market dynamics: Visible pricing experiments may influence competitor behavior or raise concerns if variation appears exclusionary, coordinated, or strategically signaling.

- Sector-specific pricing regulation: In regulated industries, pricing tests may need alignment with reimbursement rules, fee disclosure obligations, or tariff frameworks.

- Personalization and data use constraints: Pricing variation informed by user data should account for consent, profiling transparency, and automated decision-making expectations.

Ultimately, pricing experimentation must balance learning with transparency, fairness, and regulatory awareness.

How will you A/B test your pricing?

Pricing experimentation changes how organizations approach pricing. Organizations that experiment with pricing move beyond pricing debates and gain a clearer understanding of how their offerings, pricing, and customer value align.

An example:

Consider an online retailer running a pricing experiment to understand how discount framing influences customer perception of price.

Hypothesis: Presenting the same discount as a percentage versus an absolute savings amount could change how customers perceive the value of the offer and influence purchase behavior.

Experiment setup: To test this hypothesis, visitors viewing a product page are randomly assigned to one of two variants. In the control experience, the discount is displayed as a percentage (for example, “X% off”). In the treatment experience, the same discount is presented as an absolute savings amount.
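Because only the presentation changes, both labels should be derived from the same underlying prices. A hedged sketch, assuming a single currency shown with a "$" prefix and hypothetical variant names; the percentage is rounded down so the label never overstates the saving, a conservative choice given the misleading-pricing concerns discussed earlier:

```python
from decimal import Decimal, ROUND_DOWN


def discount_label(list_price: Decimal, sale_price: Decimal, variant: str) -> str:
    """Render the same discount as a percentage (control) or absolute saving (treatment)."""
    saving = list_price - sale_price
    if variant == "control":
        # Round down so the percentage never overstates the real saving.
        pct = (saving / list_price * 100).quantize(Decimal("1"), rounding=ROUND_DOWN)
        return f"{pct}% off"
    if variant == "treatment":
        # Assumes a single currency displayed with a "$" prefix.
        return f"Save ${saving.quantize(Decimal('0.01'))}"
    raise ValueError(f"unknown variant: {variant}")


if __name__ == "__main__":
    for variant in ("control", "treatment"):
        print(variant, discount_label(Decimal("80.00"), Decimal("60.00"), variant))
```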

The goal of the pricing experiment isn’t to change the underlying price. Instead, the team wants to understand whether different pricing presentations influence purchase conversion and revenue per visitor.

Primary metrics include purchase conversion and revenue per visitor, while secondary metrics track add-to-cart rate, checkout completion, and average order value.
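These metrics can be computed per variant from aggregate counts. The sketch below pairs them with a standard pooled two-proportion z-test for conversion and is illustrative only. Revenue per visitor is typically heavily skewed, so it usually calls for a separate method, such as a t-test on per-visitor revenue or a bootstrap, which this sketch does not cover:

```python
import math
from statistics import NormalDist


def variant_metrics(visitors: int, orders: int, revenue: float) -> dict:
    """Primary and secondary metrics from one variant's aggregate counts."""
    if visitors == 0:
        raise ValueError("variant has no visitors")
    return {
        "conversion": orders / visitors,
        "revenue_per_visitor": revenue / visitors,
        "average_order_value": revenue / orders if orders else 0.0,
    }


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Pooled two-sided z-test for a difference in conversion rates (B minus A).

    Assumes at least one conversion and one non-conversion overall, so the
    pooled standard error is non-zero.
    """
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value
```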

An example:

Consider a SaaS platform experimenting with its pricing.

Hypothesis: Introducing a higher-priced enterprise tier could influence how customers perceive the other pricing tiers by creating a stronger price anchor.

Experiment setup: To test this hypothesis, new visitors are randomly shown one of two pricing page variants. In the control experience, customers see the existing pricing plans. In the treatment experience, an additional high-tier enterprise plan is introduced above the existing plans.

The goal isn’t necessarily to sell the enterprise plan itself. Instead, the team wants to understand whether the presence of a higher anchor shifts more customers toward the mid-tier plan and increases overall revenue per signup.

Primary metrics include signup conversion, plan selection distribution, and revenue per signup, while secondary metrics track downstream activation, upgrades, and retention.
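Plan selection distribution and revenue per signup can be read from the same list of chosen plans. A minimal sketch, assuming hypothetical monthly list prices and made-up signup counts purely for illustration:

```python
from collections import Counter

# Hypothetical monthly list prices; "enterprise" is only offered in the treatment.
PLAN_PRICES = {"basic": 10, "pro": 30, "business": 80, "enterprise": 250}


def plan_mix(signups):
    """Return each plan's share of signups and the average list revenue per signup."""
    counts = Counter(signups)
    total = sum(counts.values())
    if total == 0:
        raise ValueError("no signups to summarize")
    shares = {plan: counts[plan] / total for plan in PLAN_PRICES if counts[plan]}
    revenue_per_signup = sum(PLAN_PRICES[plan] * n for plan, n in counts.items()) / total
    return shares, revenue_per_signup


if __name__ == "__main__":
    # Made-up signups for illustration only.
    control = ["basic"] * 6 + ["pro"] * 3 + ["business"] * 1
    treatment = ["basic"] * 4 + ["pro"] * 4 + ["business"] * 1 + ["enterprise"] * 1
    for name, signups in (("control", control), ("treatment", treatment)):
        print(name, plan_mix(signups))
```

Comparing the non-enterprise shares across variants shows whether the anchor moved customers toward the mid tier, separately from any revenue the enterprise plan itself adds.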

Interpreting results in subscription pricing experiments

However, interpreting the results requires looking beyond initial conversion. Pricing changes in subscription businesses often influence downstream behavior such as upgrades, retention, and long-term revenue. The introduction of a new tier may also reshape how customers interpret the value boundaries between plans, making it difficult to isolate whether changes in conversion are driven by pricing itself or by how the overall offering is structured.

As a result, experimentation teams frequently evaluate these tests across longer time horizons rather than relying solely on immediate signup metrics. They may also monitor how existing customers respond to the updated pricing structure, since different cohorts may continue experiencing different plan configurations long after the experiment ends.
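Evaluating over longer horizons can reverse an early result, for example when a variant attracts more high-tier signups who churn sooner. A minimal sketch, assuming a hypothetical per-customer record of monthly price and months paid, and that every customer signed up at least `horizon_months` ago; recent signups are right-censored and would need survival-style methods instead:

```python
from dataclasses import dataclass


@dataclass
class Customer:
    variant: str
    monthly_price: float
    months_paid: int  # full months paid before churning (or to date)


def horizon_metrics(customers, variant, horizon_months):
    """Revenue per signup and share of customers paying for at least `horizon_months`."""
    cohort = [c for c in customers if c.variant == variant]
    if not cohort:
        raise ValueError(f"no customers in variant {variant!r}")
    revenue = sum(c.monthly_price * min(c.months_paid, horizon_months) for c in cohort)
    retained = sum(1 for c in cohort if c.months_paid >= horizon_months)
    return {
        "revenue_per_signup": revenue / len(cohort),
        "retention": retained / len(cohort),
    }


if __name__ == "__main__":
    # Made-up customers: treatment wins at month 1 but loses by month 6.
    customers = [
        Customer("control", 30.0, 12), Customer("control", 30.0, 2),
        Customer("treatment", 80.0, 1), Customer("treatment", 30.0, 4),
    ]
    for horizon in (1, 6):
        for variant in ("control", "treatment"):
            print(horizon, variant, horizon_metrics(customers, variant, horizon))
```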

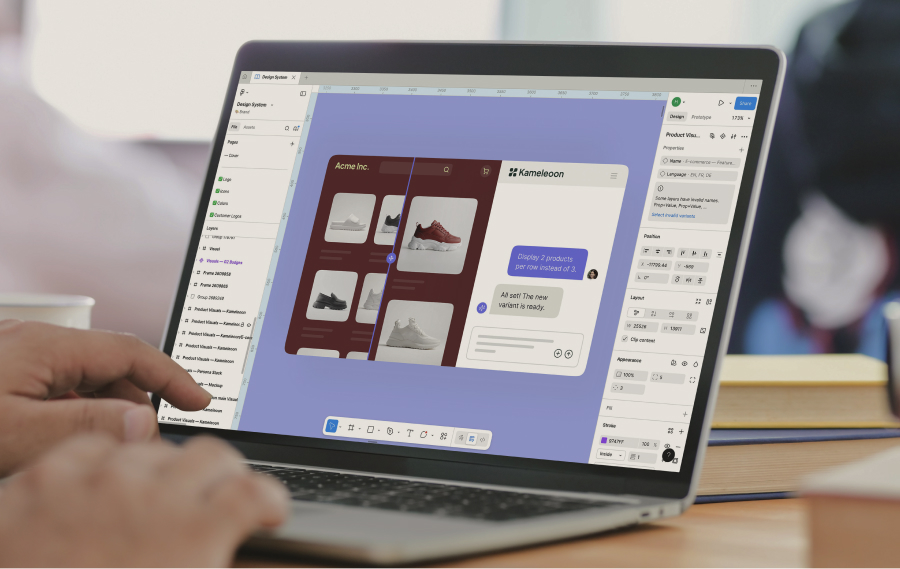

Discover how Kameleoon helps teams test pricing with confidence and learn faster.