How do I coordinate the experimentation workflow across multiple teams?

This interview is part of Kameleoon's Expert FAQs series, where we interview leaders in data-driven CX optimization and experimentation. Alex Harris is an expert in lead generation, conversion rate optimization, and website personalization. Since 2000, he has created thousands of landing pages and performed over 7,000 different A/B tests.

How do I coordinate the experimentation workflow across multiple teams?

Establishing a workflow across multiple teams takes considerable work, including:

- Creating a Center of Excellence (CoE) or stakeholder governance framework.

- Introducing change management for teams with shared goals, based on product ownership.

- Creating standard operating procedures that provide guardrails and best practices for every stage of the experimentation workflow. These stages include research, ideation, pre/post analysis, development, deployment, QA, and feature management.

Once product stakeholders and champions establish this core framework, other practitioners can be introduced to it.

Each new team will create its own version of this ‘Experimentation System’ and adapt it to its OKRs, development workflow, programming language specifics, and other needs, depending on whether it is doing client-side and/or server-side experimentation.

Each team will set program goals and KPIs that roll up to the company’s North Star goals.

What potential problems might I run into when managing the work of multiple teams in a global business?

The larger the teams, the more sites, products, and development requirements there are, and each must be tailored to how the company is set up.

Enterprise organizations often have many websites and digital destinations, including international sites, portals, mobile apps, and retail locations or kiosks.

The most common challenges I see occurring when people are managing multiple global teams are:

- Poor communication. In larger companies, teams can operate in silos and never talk to one another. A streamlined core experimentation program requires unity across teams. The most common example is misalignment between marketing and IT/development teams.

- Not engaging all stakeholders. Don’t forget to involve your external agencies within the Experimentation System to ensure everyone uses the same playbook.

- Technical clashes. If multiple teams are trying to solve similar issues, they must work together to create a reusable solution and coordinate implementation.

- Use of different tools and systems. Global teams often use different systems and project management software from one another. This results in duplicated work and a lack of clarity about what’s being worked on.

What rituals or processes are needed to strengthen coordination among my teams?

As a baseline, you need to get different teams to buy into the new experimentation mindset and leverage a governance model to ensure teams are empowered with the right skills and tools to run their Experimentation System.

Once that’s established, there are a few ways to strengthen coordination and gain consistent traction in scaling an experimentation team.

It starts with making the experimentation champions and product owners stand out and helping them get winning results and key insights that improve business KPIs.

Another way is to make it easier for different teams to submit test ideas or log ideas for future ideation. Getting more groups to submit ideas and then measuring the outcomes of their work empowers them while also giving recognition to the practitioners doing the work.

It helps to show how experimentation aligns with overall business success.

Who do I assign decision-making responsibilities to when multiple teams work on the same digital product?

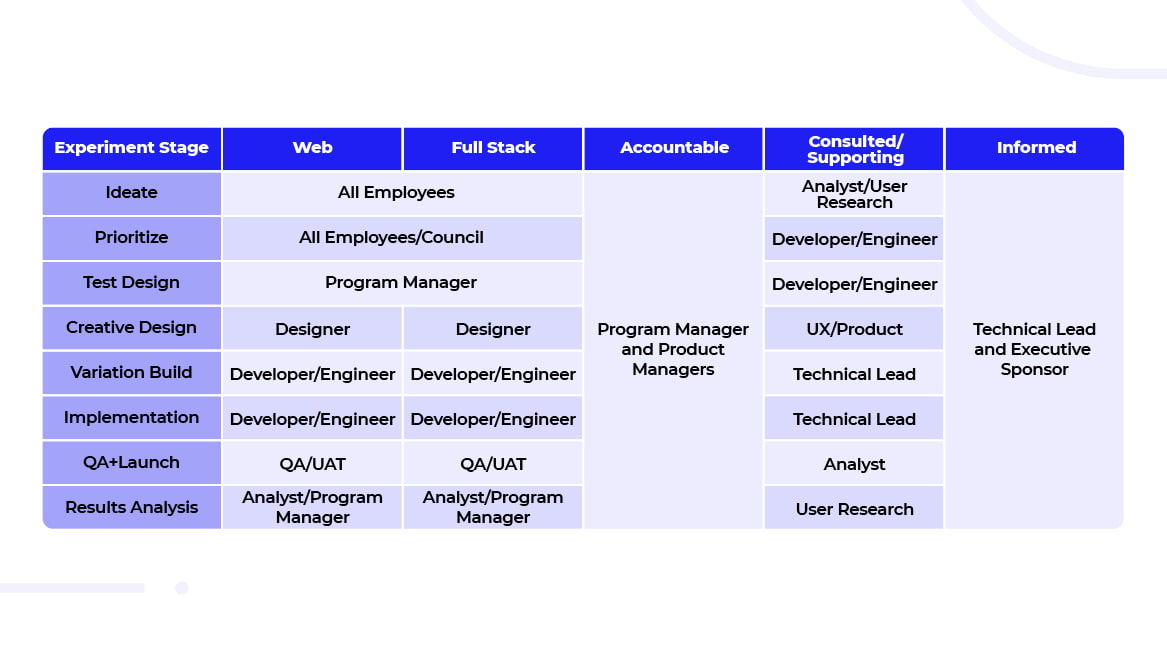

Decisions (and other experimentation responsibilities) are assigned using a RASCI for each team. A RASCI is a responsibility assignment matrix that sets out who’s Responsible, Accountable, Supporting, Consulted, and Informed for various topics.
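As a rough illustration of how such a matrix might be captured so it can be queried, here is a minimal Python sketch. The decisions, team names, and the check that each decision has exactly one Accountable team are assumptions for the example (the single-Accountable rule is a common convention, not something stated in this interview).

```python
from enum import Enum


class Rasci(Enum):
    RESPONSIBLE = "R"
    ACCOUNTABLE = "A"
    SUPPORTING = "S"
    CONSULTED = "C"
    INFORMED = "I"


# Hypothetical matrix: decision -> {team: RASCI role}.
rasci_matrix = {
    "Approve test launch": {
        "Product": Rasci.ACCOUNTABLE,
        "Experimentation": Rasci.RESPONSIBLE,
        "Development": Rasci.SUPPORTING,
        "Analytics": Rasci.CONSULTED,
        "Marketing": Rasci.INFORMED,
    },
    "Roll out winning variant": {
        "Product": Rasci.ACCOUNTABLE,
        "Development": Rasci.RESPONSIBLE,
        "Experimentation": Rasci.SUPPORTING,
        "Marketing": Rasci.INFORMED,
    },
}


def teams_with(matrix, decision, role):
    """Return the teams holding the given role for a decision, sorted by name."""
    return sorted(team for team, r in matrix[decision].items() if r is role)


def decisions_without_single_owner(matrix):
    """Return decisions that do not have exactly one Accountable team."""
    return [
        decision
        for decision, assignments in matrix.items()
        if sum(1 for r in assignments.values() if r is Rasci.ACCOUNTABLE) != 1
    ]
```

A call such as `teams_with(rasci_matrix, "Approve test launch", Rasci.ACCOUNTABLE)` answers "who decides?" for a given topic, and `decisions_without_single_owner` flags topics where ownership is missing or shared.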

Even when multiple teams work on the same product, they may work in different roles. Each experimentation team should be assigned either a Project Owner, Publisher, Editor, Viewer, or QA role.

You can then limit specific collaborator roles in your testing tool and project management software to ensure the right person has the correct access, depending on their level/role.
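To make the idea of role-based access concrete, here is a small Python sketch. The role names come from the list above, but the permission names and the mapping of permissions to roles are hypothetical; they do not reflect the permission model of any particular testing tool.

```python
# Hypothetical permission sets per collaborator role (an assumption for
# illustration, not any specific tool's actual access model).
ROLE_PERMISSIONS = {
    "Project Owner": {"view", "edit", "preview", "publish", "manage_users"},
    "Publisher": {"view", "edit", "preview", "publish"},
    "Editor": {"view", "edit", "preview"},
    "QA": {"view", "preview"},
    "Viewer": {"view"},
}


def can(role, action):
    """Return True if the role is allowed to perform the action.

    Unknown roles get no permissions at all.
    """
    return action in ROLE_PERMISSIONS.get(role, set())
```

With a mapping like this, `can("Editor", "publish")` is false while `can("Publisher", "publish")` is true, so an Editor can build a test but needs a Publisher or Project Owner to push it live.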

The Project Owner and Product Owner are responsible for ensuring all teams collaborate towards similar goals and OKRs.

How do I decide what tech stack to implement for testing workflow management?

We have a Martech evaluation framework that helps evaluate how enterprises manage projects, execute workflows, run marketing experiments, and oversee feature management.

This framework evaluates what software they use, their experience or preferences, and how the technology and integrations work together. While we make recommendations, the final decisions come down to the specifics of the business and the skills needed from internal staff.

For example, if the organization already uses a particular suite of products, the framework helps us identify which CDP, DAM, CMS, or marketing intelligence platform best meets its business objectives.

This takes into account how data orchestration is established and which integrations will work best. In analytics, while many customers use Google Analytics, a SaaS or subscription business might be better served by Heap for customer behavior analytics.

Alex, we’ve heard you are quite the skateboarder. How has skateboarding helped you become better at experimentation?

I used to be an avid skateboarder, but now I can’t risk breaking any bones, especially my wrists. These days I mostly mountain bike, ride an electric skateboard, and fish offshore.

Just doing a simple jump (known as an ollie) is hard. As a young boy, it took me months, if not years, to master the ollie. Then I did, and I leveled up by learning how to do a kickflip. You never stop trying new things and trying to make yourself better.

In experimentation, after you learn to uncover insights, you need to know how to develop the right experiment or ensure the data and analytics are correct. It is a constant learning process.

After 20 years of doing Conversion Rate Optimization, I am still learning, from the basics to new tricks like feature experimentation and personalization.