What is group sequential testing?

Traditional experimentation and A/B testing worked with a simple rule: Don’t peek.

If you looked at results before an experiment reached its planned sample size, you risked inflating false positives and making the wrong call. So teams were told to wait, analyze once, and decide at the end.

Modern experimentation works differently. Mid-test analyses are common; even necessary. But both extremes carry cost. Waiting unnecessarily delays learning, slows growth, and increases opportunity cost. Stopping prematurely risks acting on noise, scaling false positives, and eroding trust in experimentation.

Group sequential testing sits between these two extremes, allowing data to be reviewed while an experiment is still running but without sacrificing statistical rigor. Often viewed as the simplest form of adaptive design, group sequential approaches enable principled early stopping while maintaining frequentist error control.

What is group sequential testing?

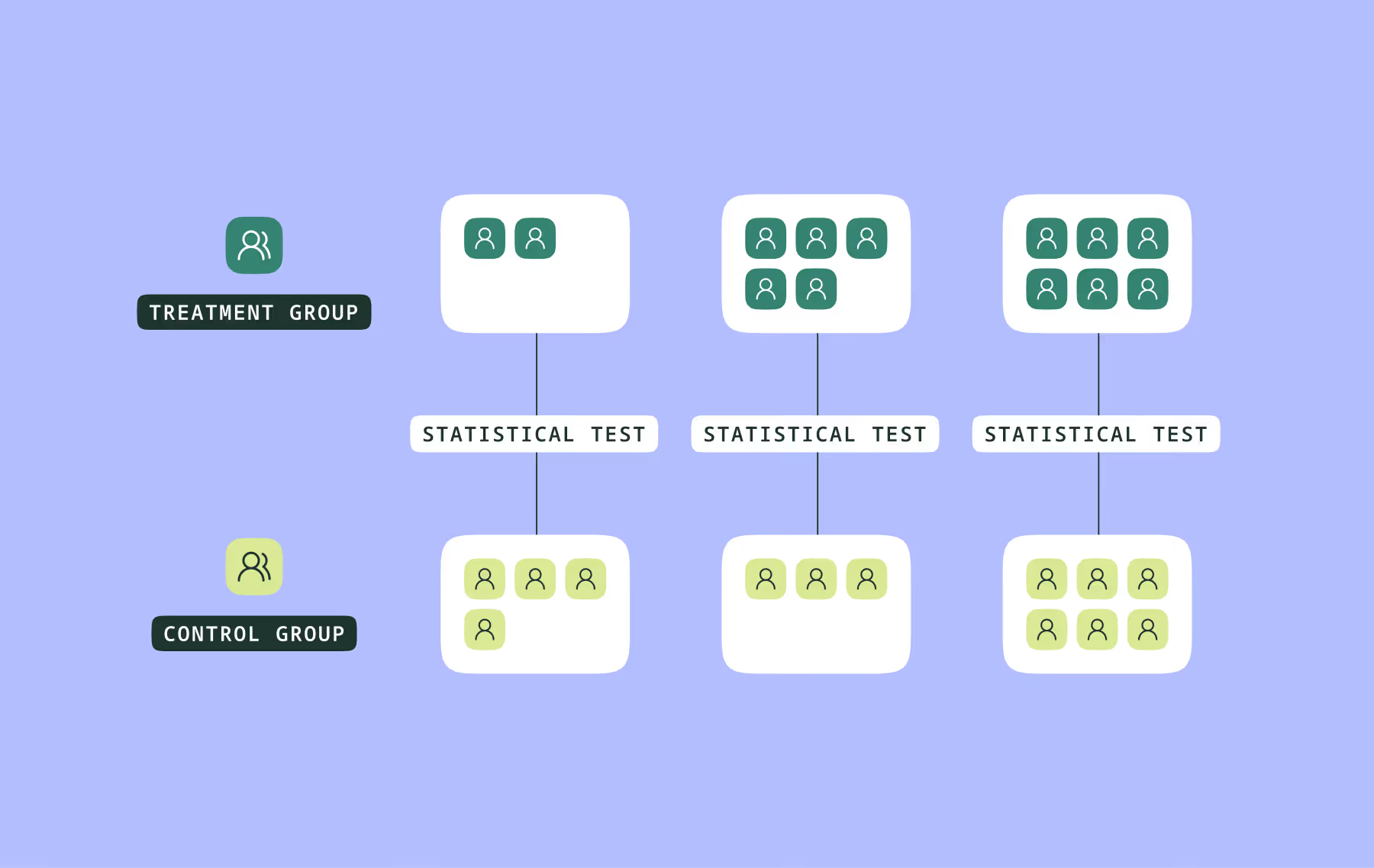

In group sequential testing, instead of analyzing experiment data only once at the end (as in a fixed-sample design) or continuously monitoring results after every observation (as in fully sequential testing), experiment data is analyzed at multiple pre-planned interim analyses as it accumulates.

The “group” in group sequential testing refers to the batch of observations collected between these interim analyses. This is staged evaluation built into the experiment design.

{{pink-block-1}}

Group sequential testing vs fully sequential testing

Fully sequential testing sits at the opposite end of the design spectrum. Rather than scheduling interim analyses for groups of observations, a running test statistic is monitored after every observation, and the experiment stops when it crosses a pre-specified boundary.

In our healthcare example, this would mean updating the evidence continuously as each additional patient session occurs, rather than waiting for structured interim looks.

Importantly, this is not the same as informally checking a p-value and stopping when results appear favorable. In fully sequential testing, stopping boundaries are mathematically derived in advance to account for continuous monitoring. They are constructed so that the overall probability of a false positive across the entire sequential process remains within the target error rate.

In this design, the stopping rule is embedded directly into the experimental framework, and sample size is determined by the evolving evidence rather than fixed in advance. In theory, this means no predetermined maximum sample size is required, as the experiment concludes once sufficient evidence accumulates.

In practice, however, fully sequential designs can introduce operational complexity:

- Continuous monitoring requires infrastructure capable of near real-time updates.

- Boundary calculations are more involved than in fixed-sample or group sequential designs.

- Interim estimates remain volatile until a stopping boundary is reached. Early engagement signals may fluctuate as different patient cohorts move through the redesigned coverage explanation.

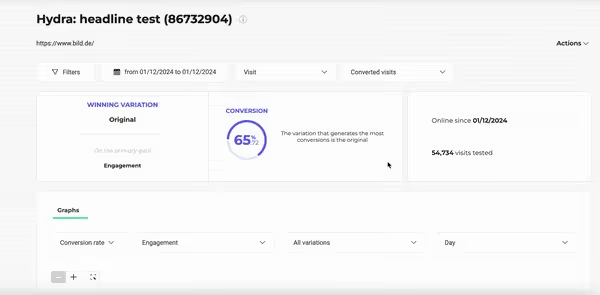

Modern experimentation platforms generally handle this complexity behind the scenes. Solutions such as Kameleoon, for example, continuously compute the running test statistic, apply stopping boundaries, and surface signals as evidence approaches decision thresholds.

Here’s Kameleoon powering a fully sequential test:

With Kameleoon, you could even set up alerts to get notified as experiments move toward potential decision points.

While fully sequential testing enables continuous monitoring, many teams adopt group sequential testing to balance statistical efficiency with operational simplicity. This balance has direct implications for how experiments are planned.

How experiment planning changes with group sequential testing

Designing a group sequential experiment requires more upfront specification than traditional fixed-sample testing, yet remains operationally simpler than fully sequential testing, which evaluates incoming data continuously. Because interim decision-making is built into the design, adaptability must be engineered into the experiment from the outset. Here is how the design work changes.

{{blue-block-1}}

Who sees interim results can matter more than it appears. Even directional signals can influence how teams interpret or support the redesign, subtly shaping the data that follows. Managing access to interim results can therefore serve as a safeguard for experimental validity.

Group sequential testing and the shift to adaptive experimentation

Group sequential testing sits naturally within the broader move toward adaptive experimentation. It introduces flexibility into experimentation without abandoning structure, allowing decisions to evolve as evidence accumulates rather than waiting for a single “final” analysis.

When implemented thoughtfully, this approach can shorten time to decision, reduce the amount of data required to reach confident conclusions, and increase trust in the outcomes organizations act upon.

The deeper shift, however, is organizational. You move from running experiments as isolated events to designing controlled decision systems. Interim analyses are no longer informal check-ins but planned evaluation moments, and stopping is no longer reactive but built into the design itself.

{{cta-block}}

1. Maximum sample size and interim timing must be pre-specified

You still calculate a maximum required sample size based on effect size, variance, and desired power.

But you must also specify:

- The exact number of interim looks

- When those looks will occur, either by participant count or by information fraction (the proportion of total planned data collected so far)

These interim analyses can’t be chosen after seeing partial results. They are part of the experiment design itself. Unlike fixed-sample testing, you are not only planning how much data you need but also planning when you are allowed to reconsider the decision.

2. The number of interim analyses influences the required sample size

Introducing interim looks typically requires a modest inflation in the maximum sample size compared to a fixed-sample design, particularly when using conservative boundary families. This ensures that overall power at the maximum sample size is preserved despite stricter early decision thresholds.

You are effectively paying a small sample-size cost in exchange for the option to stop early.

That tradeoff must be considered during planning.

3. Stopping boundaries must be chosen and pre-committed

You must select a boundary framework before the experiment begins — whether O'Brien–Fleming, Pocock, or a more general alpha-spending approach.

This choice determines how the decision thresholds evolve across interim looks.

Once the experiment starts, that choice is locked in. Changing boundary rules mid-experiment invalidates the design. The integrity of Group Sequential Testing rests entirely on this pre-commitment.

4. Futility stopping (if used) must also be pre-specified

Group sequential designs can allow early stopping not only for strong positive effects but also for futility, when accumulating evidence suggests the effect is too small to justify continuing.

If futility rules are included, they must be defined in advance, just like efficacy boundaries.

These can’t be reactive decisions.

5. Operationally feasible, but governance-intensive

Compared to fully sequential testing, group sequential designs are far more practical in many real-world environments. At the same time, compared to fixed-sample testing, they demand greater organizational discipline.

Interim analyses often involve formal review processes, careful sharing of results, and clear communication about what interim findings do and do not imply.

For example, early results from the coverage explanation experiment may be shared selectively to avoid premature adjustments to messaging or stakeholder expectations that could influence subsequent user behavior.

An example of group sequential testing in digital healthcare

Consider a digital healthcare organization testing a redesigned insurance coverage explanation flow inside its patient portal. The redesign aims to improve the percentage of patients who fully review their benefits before booking an appointment (thereby reducing confusion, billing disputes, and downstream support burden).

Suppose the team determines that detecting a meaningful improvement requires a maximum of 10,000 patient sessions. Rather than waiting until all 10,000 sessions are collected, they pre-specify interim analyses at 25%, 50%, 75%, and 100% of the planned sample:

- 2500 sessions (first interim analysis)

- 5000 sessions (second interim analysis)

- 7500 sessions (third interim analysis)

- 10000 sessions (final analysis)

These groups don’t need to be equal in size. What matters is that the analysis schedule (including the target sample sizes or information levels at each look) is defined before the experiment begins.

At each interim analysis, the team evaluates whether the redesigned experience meaningfully improves benefit-review completion compared to the existing experience. At any interim analysis, the experiment may:

- Stop early because evidence is strong enough to conclude that the redesigned coverage explanation improves completion.

- Stop early because accumulating evidence suggests a meaningful improvement is unlikely to emerge.

- Continue to the next planned analysis.

For example, if the redesign crosses the pre-defined statistical boundary at the 5,000-session look, the team can confidently roll it out without waiting for all 10,000 sessions.

What distinguishes Group Sequential Testing is not simply that results are reviewed mid-experiment, but that interim decision-making is intentionally built into the design while preserving statistical validity.

Rather than framing experimentation as a single end-of-test verdict, Group Sequential Testing treats it as a sequence of planned evaluation opportunities that enable earlier learning without sacrificing rigor.

Understanding this structured form of sequential decision-making makes it easier to place Group Sequential Testing in context alongside fully sequential approaches, which operate at the opposite end of the monitoring spectrum.

Learn more about how Kameleoon can support your organization with such adaptive experimentation and more.

Learn more about how Kameleoon can support your organization with such adaptive experimentation and more.